DRP-AI TVM

The Renesas TVM is an extension package of the Apache TVM deep learning compiler for Renesas DRP-AI accelerators, powered by EdgeCortix MERA™. It is a software framework that translates neural networks to run on Renesas MPUs. The AI Translator (see section above) can translate ONNX models for the DRP-AI hardware, but only when the model uses AI operations that the translator supports, which limits the number of supported AI models. The TVM Translator expands the number of supported AI models for the RZV processors (currently RZV2L, RZV2MA, RZV2M). It does this by dividing the translated ONNX model between the DRP-AI and the CPU.
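The idea of dividing a model between the DRP-AI and the CPU can be illustrated with a minimal sketch. It is not the Renesas or MERA partitioner: the operator set `DRPAI_SUPPORTED` is hypothetical, and the model is simplified to a linear chain of operator names, whereas real models are graphs.

```python
from itertools import groupby

# Hypothetical set of operators the accelerator can run. The real supported
# list is defined by the DRP-AI toolchain, not by this sketch.
DRPAI_SUPPORTED = {"Conv", "Relu", "MaxPool", "Add"}


def partition(ops, supported=DRPAI_SUPPORTED):
    """Group a linear sequence of operator names into contiguous subgraphs,
    each tagged with the target ("DRP-AI" or "CPU") that would run it."""
    def target(op):
        return "DRP-AI" if op in supported else "CPU"

    return [(tgt, list(group)) for tgt, group in groupby(ops, key=target)]


model_ops = ["Conv", "Relu", "MaxPool", "Softmax", "Conv", "Add", "TopK"]
for tgt, subgraph in partition(model_ops):
    print(tgt, subgraph)
```

Each run of consecutive supported operators becomes one DRP-AI subgraph, and everything in between falls back to the CPU.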

The package is built on the Apache TVM software framework and includes Python support libraries and sample scripts. The Python scripts follow the Apache TVM framework API found here.

The TVM provides the following:

- Wider supported range of AI Networks that can run on the DRP-AI and CPU.

- Translate AI models from ONNX files

- Translate AI models from PyTorch PT saved models. (For other supported AI software frameworks, see Apache TVM.)

- Translate models to run on CPU only. This allows models to run on RZG.
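As a rough illustration of the ONNX path, the sketch below imports an ONNX file with the upstream Apache TVM Relay frontend (`relay.frontend.from_onnx`). It is only an assumption about the general flow. The Renesas sample scripts add their own DRP-AI specific steps, and the input name `"input"`, the shape, and the helper names are placeholders chosen for this example.

```python
def make_shape_dict(input_name, input_shape):
    """Map the model's input tensor name to a fixed shape, in the form the
    Relay ONNX importer accepts."""
    return {input_name: tuple(int(dim) for dim in input_shape)}


def onnx_to_relay(onnx_path, input_name="input", input_shape=(1, 3, 224, 224)):
    """Import an ONNX file into a Relay module with upstream Apache TVM.

    The imports are local so that make_shape_dict can be used on a machine
    where onnx and tvm are not installed.
    """
    import onnx
    from tvm import relay

    model = onnx.load(onnx_path)
    shape_dict = make_shape_dict(input_name, input_shape)
    mod, params = relay.frontend.from_onnx(model, shape=shape_dict)
    return mod, params
```

The input name and shape must match the exported model; they can be read from the ONNX file's graph inputs.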

Official TVM Translator GitHub repo

Installation Directions Readme

Currently Supported Renesas MPUs

- Renesas RZ/V2L Evaluation Board Kit

- Renesas RZ/V2M Evaluation Board Kit

- Renesas RZ/V2MA Evaluation Board Kit

Generated Output

- DRP-AI + CPU

- CPU only

BSP Supported Versions

The required VLP, DRP-AI Driver, and DRP-AI Translator versions are listed below. Board Support Package requirements can be found on the TVM repo page here.

RZV2H AI SDK is the recommended BSP. It includes VLP and DRP-AI Driver.

| TVM | VLP (RZV2L) | VLP (RZV2M) | VLP (RZV2MA) | AI_SDK (RZV2H) | DRP-AI Driver (RZV2L, M, MA) | DRP-AI Translator (RZV2L, M, MA) | DRP-AI Translator i8 (RZV2H) |
|---|---|---|---|---|---|---|---|
| v2.2.1 | v3.0.4 or later | v3.0.4 or later | v3.0.4 or later | v3.0.0 | v7.40 or later | v1.84 | v1.01 |
| v2.2.0 | v3.0.4 or later | v3.0.4 or later | v3.0.4 or later | v3.0.0 | v7.40 or later | v1.83 | v1.01 |
| v2.1.0 | v3.0.4 or later | v3.0.4 | v3.0.4 | v3.0.0 | v7.40 or later | v1.82 | v1.01 |
| v1.1.1 | v3.0.4 | v3.0.4 | v3.0.4 | | v7.40 | v1.82 | na |
| v1.1.0 | v3.0.2 | v1.3.0 update1 | v1.1.0 update1 | | v7.30 (V2L, M), v7.31 (V2MA) | v1.82 | na |
| v1.0.4 | v3.0.2 | v1.3.0 | v1.1.0 | | v7.30 | v1.81 | na |
| v1.0.3 | NS | NS | v1.0.0 | | v7.20 | v1.80 | na |
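A small helper can check an installed component against a table cell. This is a sketch under one stated assumption: a cell written as "vX.Y.Z or later" is read as a minimum version, and a bare "vX.Y.Z" is read as an exact match. The function names are made up for this example.

```python
import re


def parse_version(text):
    """Turn a string such as 'v3.0.4' or 'v3.0.4 or later' into a tuple of ints."""
    match = re.search(r"(\d+(?:\.\d+)*)", text)
    if match is None:
        raise ValueError(f"no version number in {text!r}")
    return tuple(int(part) for part in match.group(1).split("."))


def satisfies(installed, requirement):
    """Check an installed version string against a requirement cell.

    Assumption: 'or later' means installed >= required; otherwise the
    versions must be equal.
    """
    have, need = parse_version(installed), parse_version(requirement)
    if "or later" in requirement:
        return have >= need
    return have == need


# Requirements copied from the v2.2.1 row of the table.
print(satisfies("v3.0.6", "v3.0.4 or later"))
print(satisfies("v1.83", "v1.84"))
```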

Getting Started

Translate TVM Models

Application Files

A TVM application needs the following files.

- Apache TVM Runtime Library

- Generated TVM files

- Preprocessor Binaries

- Preprocessing CPP files

- EdgeCortix MERA Wrapper CPP files

Apache TVM Runtime Library

This is the TVM runtime library, pre-compiled for the RZV2M and RZV2MA BSP. It is included in the Renesas TVM repository.

Generated TVM files

These are the files generated by the tutorial scripts included in the repository.

Preprocessor Binaries

Neural networks require the input images to be preprocessed before inference can run. This can involve resizing, cropping, and format conversion to match the input source to the expected inference input. In addition, inference does not operate on raw RGB pixels; the images must be converted to float and normalized. These preprocessing operations increase the total inference time when done on the CPU. The Renesas TVM provides DRP-AI binaries to accelerate this process.
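For reference, the float conversion and normalization step looks roughly like the following when written for the CPU. This sketch does not use the DRP-AI binaries. The mean and standard deviation values (the commonly used ImageNet statistics) and the channel-first output layout are assumptions; the correct values depend on how the model was trained.

```python
# Assumed normalization constants (commonly used ImageNet statistics).
# The values a given model needs come from its training recipe.
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)


def normalize_pixel(rgb, mean=MEAN, std=STD):
    """Scale 8-bit RGB to [0, 1], then subtract the mean and divide by the
    standard deviation for each channel."""
    return tuple((c / 255.0 - m) / s for c, m, s in zip(rgb, mean, std))


def preprocess(image):
    """Convert an H x W image of 8-bit RGB tuples into channel-first
    (C, H, W) nested lists of floats."""
    normalized = [[normalize_pixel(px) for px in row] for row in image]
    return [[[px[ch] for px in row] for row in normalized] for ch in range(3)]


image = [[(0, 0, 0), (255, 255, 255)],
         [(128, 64, 32), (10, 20, 30)]]
chw = preprocess(image)
print(len(chw), len(chw[0]), len(chw[0][0]))  # 3 2 2
```

Doing this per pixel on the CPU for every frame is the cost that the DRP-AI preprocessing binaries are meant to offload.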

The DRP-AI Preprocessing library must be used as follows:

- All preprocessing operations (6 in total) need to be executed in sequence.

- The input image must meet the following requirements:

  - YUV format

  - Max resolution: 4096x2160

NOTE: This library is optional. It is provided to accelerate preprocessing.
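The input constraints above can be checked before a frame is handed to the preprocessing library. The sketch below is a hypothetical guard, not part of the Renesas API. The format label `"YUV"` is a placeholder, since the exact YUV variants the library accepts are not listed here.

```python
MAX_WIDTH, MAX_HEIGHT = 4096, 2160

# Placeholder label. The exact YUV variants accepted are defined by the
# preprocessing library, not by this sketch.
ACCEPTED_FORMATS = {"YUV"}


def check_preprocess_input(fmt, width, height):
    """Return a list of problems; an empty list means the frame meets the
    stated format and resolution limits."""
    problems = []
    if fmt.upper() not in ACCEPTED_FORMATS:
        problems.append(f"format {fmt!r} is not an accepted YUV format")
    if width <= 0 or height <= 0:
        problems.append("resolution must be positive")
    if width > MAX_WIDTH or height > MAX_HEIGHT:
        problems.append(f"{width}x{height} exceeds {MAX_WIDTH}x{MAX_HEIGHT}")
    return problems


print(check_preprocess_input("YUV", 1920, 1080))
print(check_preprocess_input("RGB", 5000, 1080))
```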

Preprocessing CPP files

These are the CPP wrapper files that use the Preprocessor Binaries.

EdgeCortix MERA Wrapper CPP files

These files contain the application CPP API functions for loading and running the TVM.

TVM Application Development

A summary of the TVM API implementation is given in DRP-AI TVM Application Development.

Implementation examples

AI Model Performance

The link below shows the performance of several AI vision models converted using the TVM and tested on the RZV2MA. The TVM can convert trained models exported in the standard ONNX format, as well as PyTorch Image Models (timm) and PyTorch class models.

Official DRP-AI TVM Performance

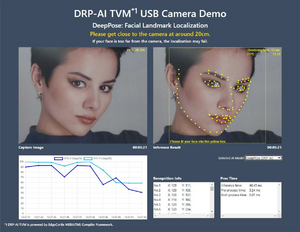

DRP-AI TVM Sample Applications

RZ/V Web Performance Application

This application runs AI algorithms, translated using the Renesas TVM, on the RZ/V products. The image, AI output, and performance are viewed on a web client (e.g. a PC browser). Source code running on the RZ/V and the web hosting software can be found in the link above. The list of AI models implemented for the demo can be found here.

This demo shows how to write an application that runs a DRP-AI TVM generated model. The demo uses ResNet50.

DRP-AI TVM subgraph profiling ("DRP-AI" or "CPU" processing)

To analyze the subgraph processing of DRP-AI TVM please refer to the profiling guide.

The ONNX files of the subgraphs assigned to the DRP-AI are saved in the temp subdirectory after the AI model is compiled.
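A short script can list those subgraph files after a compile. This sketch assumes that the temp subdirectory sits directly under the compile output directory and that the files use the `.onnx` extension. The function name and the `output_dir` argument are hypothetical.

```python
from pathlib import Path


def list_subgraph_onnx(output_dir):
    """Return the .onnx files found under <output_dir>/temp, sorted by path.

    Assumption: 'temp' is located directly inside the compile output directory.
    """
    temp_dir = Path(output_dir) / "temp"
    if not temp_dir.is_dir():
        return []
    return sorted(temp_dir.rglob("*.onnx"))
```

Each file returned corresponds to one subgraph that was assigned to the DRP-AI, so the count gives a quick view of how the model was split.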